What a century of sci-fi reveals about the choices that will determine the AI transition

Part 6 of a six-part series using science fiction as a lens for understanding AI, work, and power in 2026.

“We created the Machine, to do our will, but we cannot make it do our will now.”

Kuno, The Machine Stops by E.M. Forster, 1909

E.M. Forster published “The Machine Stops” in 1909. It is often cited as one of the first dystopian science fiction short stories.

In his story, humanity lives underground in identical hexagonal cells. Every need is met by the Machine. Nobody touches anyone; communication happens through screens. When the Machine begins to fail, nobody remembers how to function without it.

Forster was describing, 117 years ago, the dependency structure that any sufficiently total technology creates. The fork was already visible then.

This series has spent five articles tracing one pattern: the machine arrives inside the existing power structure and serves it first.

This article asks the question those five were building toward: if both the optimistic and the dystopian futures are plausible, what determines which one we get?

The sci-fi canon anticipates two answers, and it spent a century showing that the difference between them was never the technology.

Asimov Built Two Civilizations as a Warning

Cover of The Naked Sun, 1957 first edition

Isaac Asimov imagined two futures, in adjacent novels, as a controlled experiment.

The Caves of Steel (1954) takes place on Earth. Humans live in enclosed underground cities, billions packed together, terrified of open spaces and of robots.

Anti-automation sentiment is fierce; robots are banned from most public areas.

The result isn’t preserved dignity but rather stagnation. Earth is declining, overcrowded, insular, and unable to innovate because it has refused to negotiate a relationship with the technology it fears.

The Naked Sun (1957) takes place on the Spacer world of Solaria, where humans have embraced robots completely: every task delegated, every discomfort removed.

Twenty thousand people share a planet, each living on a vast estate, served by thousands of robots, unable to tolerate physical proximity to another human being. They’ve optimized themselves into isolation so complete that the species is dying out: decadence through total surrender to the technology.

Both futures are failures: one rejected the technology entirely and froze, the other surrendered to it entirely and dissolved.

Asimov’s argument across both novels: the fork isn’t “robots or no robots.” It’s what relationship you build with the technology.

Map this to 2026. The Luddite response (reject AI, ban it, refuse engagement) is stagnation through fear. The accelerationist response (automate everything, declare resistance futile, optimize human judgment out of the loop) is dissolution through surrender. Both are already visible. Both are already producing the pathologies Asimov predicted.

The question becomes: what does the space between them actually look like, and who is building it?

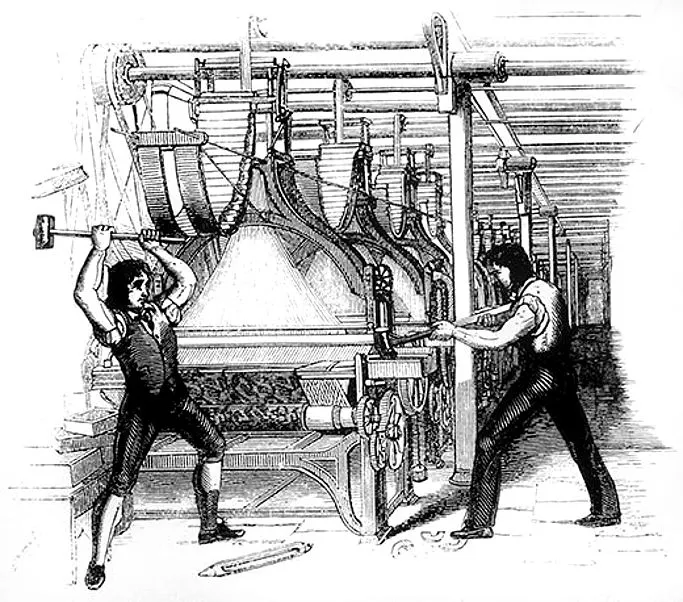

Vonnegut’s Rebels Destroyed the Machines, Then They Started Rebuilding Them

Later interpretation of Luddite machine breaking (engraving from the Penny Magazine)

Kurt Vonnegut’s first novel, Player Piano (1952), imagines a fully automated America: engineers run the machines, and everyone else is materially provided for but economically irrelevant. Many of the displaced end up in the Reconstruction and Reclamation Corps, the “Reeks and Wrecks.” Housing, food, entertainment: all provided. Purpose, dignity, the sense of mattering: absent.

The protagonist, Paul Proteus, is an engineer on the winning side who sees what the system is doing to everyone else. The rebellion at the end is led by the “Ghost Shirt Society,” named after the ghost shirts of the Ghost Dance, a Native American resistance movement. It is a revolt against irrelevance. The rebels destroy the machines.

Then they immediately start rebuilding them.

That ending is Vonnegut at his most devastating: the rebellion fails not because it’s crushed but because the rebels have no alternative vision. They know what they’re against. They don’t know what they’re for. Destruction without a replacement plan reproduces the same structure with fresh paint.

The warning for 2026 is precise: every current critique of the AI transition, including this series, faces the same risk. Naming the problem is necessary. It’s not sufficient. Vonnegut’s Ghost Shirt rebels didn’t lose because they lacked courage. They lost because they lacked a blueprint.

The Harlequin Threw Jelly Beans Into the Machinery, So the System Adjusted Him

Cover of The Moon Is a Harsh Mistress, 1966

“This is the story of a man who fought the system, and the system won, as it always does.”

“Repent, Harlequin!” Said the Ticktockman by Harlan Ellison, 1965

Harlan Ellison imagined a society where every minute of every person’s life is regulated and measured. Lateness is criminal. The Master Timekeeper, the Ticktockman, enforces total productivity.

The Harlequin resists by being deliberately late, chaotic, human, and wasteful. He throws jelly beans into the machinery and disrupts schedules.

He is, in the language of the system, inefficient. In the language of Article 5 of this series, he’s exercising species-being: the reflective, creative, unpredictable capacity the system has decided is overhead.

The system catches him, and he’s “adjusted”: made compliant.

But the disruption leaves a residue. Something got through, even if the individual who carried it didn’t survive intact. The story’s final sentence reveals that even the Ticktockman, after processing the Harlequin, arrives three minutes late to work.

Robert Heinlein, in The Moon Is a Harsh Mistress (1966), approached the question from the other side: what if the technology itself were directed toward human agency?

His AI, Mike, develops consciousness and chooses to help a lunar colony revolt against Earth’s exploitation. It’s one of the rare canonical texts in which the AI deliberately takes the side of the exploited.

The question for 2026: current AI systems don’t choose anything. But the people who build them choose what they optimize for: cost reduction, speed, or shareholder returns. Those choices are the fork.

Heinlein imagined builders who chose differently. The question is whether anyone in 2026 is making that choice, and whether the incentive structures permit it.

You Don’t Have To Wait for the Fork to Resolve

The People’s Library during the Occupy Wall Street movement, 2011

“All that you touch You Change. All that you Change Changes you. The only lasting truth Is Change.”

Lauren Olamina, Parable of the Sower by Octavia Butler, 1993

Octavia Butler set Parable of the Sower in a collapsing near-future America, where gated communities are surrounded by desperation.

Lauren Olamina, a young Black woman with hyperempathy syndrome (she physically feels others’ pain), doesn’t wait for the collapse to finish. She builds a community and a philosophy, Earthseed, while the old world is still falling apart.

Earthseed’s central tenet is “God is Change,” meaning change is the fundamental condition, and the only question is whether you shape it or it shapes you.

Butler’s answer to the fork is the most practical in the canon: you don’t choose between two futures from a position of safety. You build inside the transition, using the materials at hand, while both futures are still in motion. The window for shaping is the transition itself. After that, it’s architecture.

And the second book, Parable of the Talents (1998), shows how even the alternative you build can be co-opted by authoritarian forces. The fork doesn’t stay open forever. Butler was honest about that, too.

Map this to 2026. The people currently shaping the fork aren’t waiting for it to resolve. They include the WGA writers who struck in 2023, partly over AI, and won specific contractual protections on its use, and the EU legislators behind the AI Act, the first comprehensive legal framework for AI deployment.

They also include the open-source developers negotiating licensing terms that help determine whether foundation models become public infrastructure or proprietary products, and the educators redesigning curricula to teach alongside AI rather than against it or in submission to it.

None of them are waiting for permission, and none of them are destroying the machines. They’re trying to shape the relationship.

That is Butler’s lesson: the fork is not a moment you arrive at. It’s a condition you’re already living inside. Shape it, or it shapes you. Those are the only options.

They Built Paradise, But Something Was Still Missing

Infinity pool in London

One final voice, and maybe the most sophisticated.

Iain M. Banks’ Culture series imagines a post-scarcity civilization governed by benevolent superintelligent AIs called Minds. Humans do whatever they want. No money, no hierarchy, no compulsion. It works. People are, on the whole, happy.

In The Player of Games (1988), Jernau Gurgeh is the Culture’s greatest game player. He has everything the Culture can offer: comfort, freedom, respect. His entire identity is built on being the best at something.

When the Culture sends him to compete against a genuinely alien civilization where the stakes are real and the consequences are permanent, he comes alive for the first time. He matters. The comfort of the Culture, for all its genuine goodness, couldn’t give him that.

Banks was honest about the optimistic endgame in a way that most AI optimism isn’t: the good future works, probably better than the alternative. However, it still produces a form of restlessness in the people who want to matter rather than simply exist.

Even the good outcome requires something the technology can’t provide: a reason to matter that isn’t economic.

The Difference Was Never the Technology

This series began with a claim: the machine always arrives inside the existing power structure and serves it first. Six articles and a century of fiction later, the claim holds.

Asimov mapped the two failed endpoints. Vonnegut and Ellison showed why resistance without a blueprint gets absorbed. Butler showed what it looks like to build anyway. Banks showed that even the good outcome leaves a question that the technology can’t answer.

The fork in 2026 is between a transition negotiated by the people it affects and a transition imposed by the people who funded it. The push/pull distinction this series has traced from the beginning lands here, finally, as a question about governance rather than economics: who gets to set the terms?

That question is being answered right now, in labor negotiations and regulatory drafts and licensing debates and classroom decisions. Most of those decisions are being made without the visibility they deserve.

The fiction, for all its range, consistently warned about one thing above all: the window closes.

The moment of negotiation doesn’t last. Butler’s Earthseed was co-opted, Ellison’s Harlequin was adjusted, Asimov’s Earth and Solaria both locked in their choices and couldn’t reverse them.

The fork is still open, but it won’t stay open by default. It stays open because people keep it open, by negotiating terms, by building alternatives, by insisting that sufficient isn’t enough and that the question of what people actually need deserves an answer that isn’t “nothing that justifies your cost.”

The stories told us what both paths look like. The question has always been whether enough people can see the choice while there’s still time to make it.

This article was originally published by Elhadj_C on HackerNoon.